About the project

Impact

Reduced time spent assessing new RFPs by upwards of 80%

Decreased cognitive load by using plain language

Better quality submissions due to improved clarity of RFP project goals

U.S. Digital Response (USDR) and the National Association of State CIOs (NASCIO) conducted a two-month user research project to understand how technology vendors evaluate incoming solicitations or Requests for Proposals (RFPs).

Based on feedback, we proposed changes to solicitation templates to make them easier for vendors to assess and evaluate.

USDR + NASCIO

Potential Impact

Various states adopting our designed solution

Enabled a national organization to expand summary sheet research to additional procurement types

Role on team

I was one of two UX Researchers and was also the team's Designer. The rest of the team included our wonderful Project Manager and our USDR Lead/Procurement Expert.

Research Objectives

Understand how front-line government technology vendors receive RFPs and determine whether an RFP is worth a deeper review

Understand the key information vendors seek when they first review a new RFP

Identify pain points and solutions to make the RFP review process easier

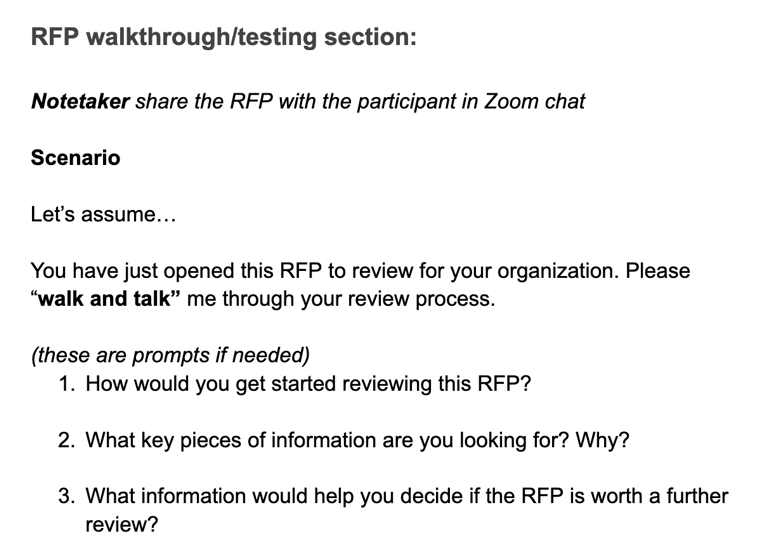

Our research was split into three phases. We started with a survey of NASCIO Corporate Members, which our team collaborated on. Next, we scheduled user interviews split into two sections: an interview and a usability test in which we asked participants to "Walk and Talk" us through their review process. Finally, informed by the previous rounds of research, we applied what we learned to create an additional element and scheduled another round of user interviews to test our concept.

Survey

Interview & Usability Test

Interview & Concept Test

Survey results

NASCIO closed the survey after two weeks with 34 responses. After that, I created a FigJam board to affinity map the open-ended responses and identify themes.

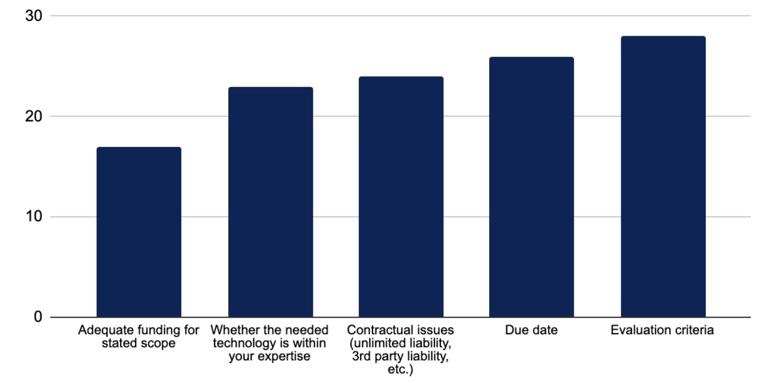

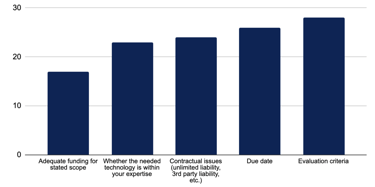

Q11: When quickly scanning a new solicitation, what do you look for to determine if it's right for your organization? (Top 5)

Q15: What information would you like to be included within a cover letter or summary? (Top 5)

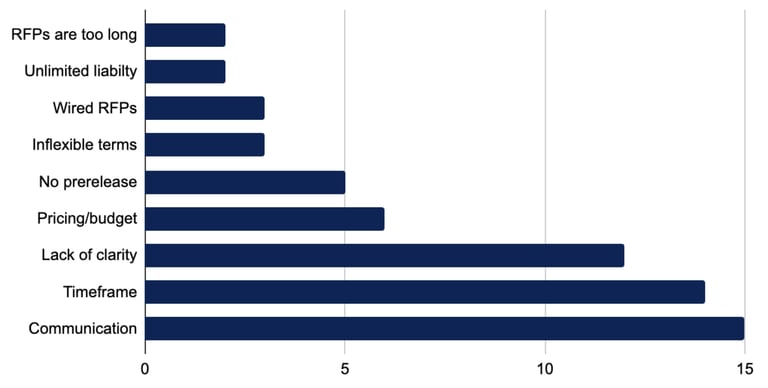

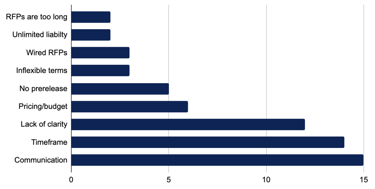

Q18: What's your biggest pain point in the RFP process with state government?

Strategic goal/Scope: “the why”

Timeframe

Budget

Mandatory requirements

Selection criteria

User Interviews / Usability test

Methodology: Remote moderated user interview and usability testing with 4 new participants via Zoom

Goals: Understand vendors' current experience reviewing state-issued RFPs through a user interview. The usability test will help us observe how vendors actually review an RFP and what information might help them review faster.

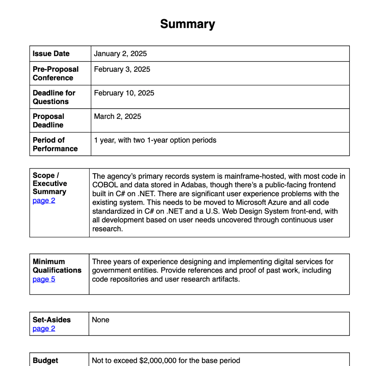

Materials: An actual RFP, selected as a standard representation of custom software projects, something all our vendors would be familiar with.

Participants: Mix of NASCIO corporate members and small to large business tech vendors

Analysis: Recorded sessions analyzed in Dovetail for patterns and specific feedback

What we learned

All of our participants are the first to review RFPs for their organizations and regularly engage with RFPs. Their evaluation processes were similar to each other:

All participants initially scan the doc for key info

Some reviewers use AI to summarize RFPs

Some use “control-F” to search for key terms

If an RFP looks promising…

Some reviewers use an internal “decision tool” to score RFPs

Reviewers can spend hours reading the entire RFP

Across all the interviews, participants consistently identified the following as crucial elements they look for:

Clear problem and scope of work

Submission criteria and requirements

Budget information

Project timeline/deadlines

Minimum qualifications

Those crucial elements were also observed during the usability test section of the session:

Submission criteria (4 out of 4 vendors mentioned)

Qualifications (3/4)

Budget (3/4)

Scope (3/4)

“[It’s] time consuming just to… understand what the structure is and where to look for certain information.”

- Interview participant

Vendors mentioned that simple changes in formatting, such as increasing font size and providing scannable content, could have a meaningful impact on the ease of reviewing RFPs.

Applying the research

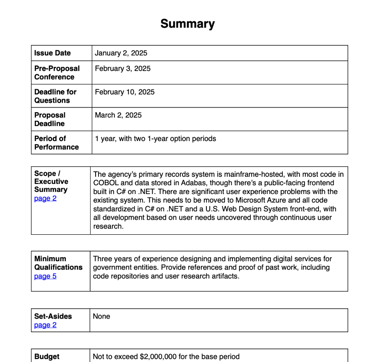

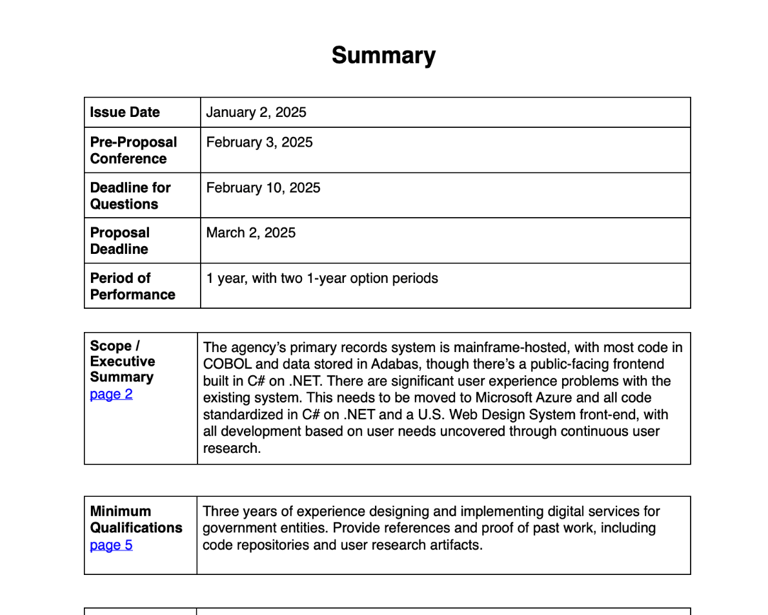

Following the interviews, we analyzed the data to inform a potential solution. I designed a standardized summary page that can be added to any RFP, centralizing the most sought-after information identified in the survey, user interviews, and usability test.

This approach reduces the burden on reviewers by eliminating the need to search through lengthy documents and decipher complex language.

User Interviews / Concept test

In the last phase of this research, we wanted to test our concept and observe how people would interact with the summary page. We again started each session with a set of questions to understand this group's process for reviewing RFPs.

Methodology: Remote moderated user interview and concept testing with 4 new participants via Zoom

Goals: Understand vendors' current experience reviewing state-issued RFPs through a user interview. The concept test will help us learn whether our summary is useful for reviewers and what information would be helpful for them.

Materials: Actual RFP with our summary page included

Participants: Mix of NASCIO corporate members and small to large business tech vendors

Analysis: Recorded sessions analyzed in Dovetail for patterns and specific feedback

What we learned - Round 2

During the second interview phase, participants demonstrated the same RFP review behaviors and information-seeking patterns we had identified in both the survey and first round interviews.

Similar suggestions for improving state-issued RFPs:

A table of contents with clear labeling.

Separate sections for procurement rules and for project scope - for clarity.

A checklist to help manage the submission criteria.

More clarity around the current state and the future state of a project.

Perfect score this round! We asked both usability and concept testers:

How easy is it to find the info you were looking for?

On a scale: 1 - 5 with 1 = very hard and 5 = very easy

5/5

Previous score was 3.4/5

Vendors mentioned other important details that could be helpful:

Evaluation criteria

Contact information for the Procurement Officer

Preferred or existing tech stack

Key personnel requirements

Any specific certifications, etc.

For technology vendors, our additional summary page could positively impact:

Bid/no bid decision time

Number of appropriate bids

Consistency across states (that would be easy to implement)

All participants could quickly locate the essential information they needed to make bid/no-bid decisions. The summary page eliminated the frustrating hunt through dense documents that we'd observed in earlier testing.

Continuing the Conversation

Our work was really just the start of more conversations and research about government-written RFPs.

Potential areas to explore more:

What impact do summary sheets have on proposals and resulting contracts, and how can that impact be measured?

Understand the states’ RFP writing process

Who is writing RFPs? Do they have specialization for the industries they are writing for?

What other industries would benefit from summary sheets, and what should be included in those?

Update: currently being researched by a national organization!

The research was published!

U.S. Digital Response and NASCIO published a report based on our team's research in February 2025.

From Research to Real-World Impact

Following the report publication, our research gained some momentum across government procurement communities. The National Association of State Procurement Officers (NASPO) invited us to present our findings in collaboration with NASCIO, where the USDR Lead and I spoke to 125 state procurement officers and staff.

The impact continued to grow! The USDR Lead and I were subsequently asked to co-present to the InnovateUS community with a similar audience of 125. And our USDR Lead has since been invited to speak with additional organizations.

Most rewarding of all, we've begun spotting our summary page template being used in actual state RFPs, showing that our research has moved from insight to implementation.